Marketing AI agents for customer targeting in telemarketing can also be easily implemented using the new library "smolagents." This looks promising!

1. Marketing AI Agent

To reach potential customers efficiently, you need to target those who are likely to purchase your products or services. Marketing directed at customers who have no need for them is usually wasted effort. However, identifying in advance which customers to focus on from a large customer list is a challenging task. To make it easy to target customers without complex analysis, provided you have customer-related data at hand, we have implemented a marketing AI agent. Anyone with basic Python knowledge should be able to implement it without much difficulty. The secret lies in the recently released framework "smolagents" (1), which we introduced previously. Please refer to the official documentation for details.

2. Agent Predicting Potential Customers for Deposit-Taking Telemarketing

Let's actually build an AI agent. The theme is "Predicting potential customers for deposit-taking telemarketing with an AI agent using smolagents." As before, we provide the data and want the AI agent itself to write Python code and automatically display "the top 10 customers most likely to be successfully reached by telemarketing."

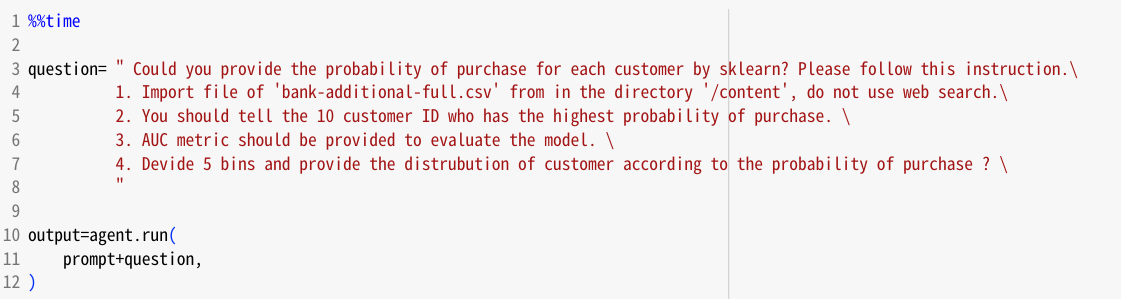

For the coding itself, please refer to the official documentation; here we present the kind of prompt to write so that the AI agent predicts potential customers for deposit-taking telemarketing. The key point, as before, is to instruct it to "use sklearn's HistGradientBoostingClassifier for data analysis." This is scikit-learn's histogram-based gradient boosting classifier, highly regarded for its accuracy and ease of use.

Furthermore, as the question (instruction), we specifically ask it to calculate "the purchase probability of the 10 customers most likely to be successful." The input to the AI agent takes the form "prompt + question."
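The "prompt + question" input can be sketched as below. This is only an illustration: the wording of `PROMPT` and the helper `build_task` are hypothetical, since the exact prompt text is in the notebook (2) rather than in this article. The commented-out agent call assumes the smolagents class names as of its early 2025 releases, so please check the official documentation before relying on it.

```python
# Hypothetical prompt text: the article does not reproduce the exact wording.
PROMPT = (
    "You are a data analyst. A CSV file of customer records is provided. "
    "Use sklearn's HistGradientBoostingClassifier for data analysis."
)

# The question quoted in the article.
QUESTION = (
    "Calculate the purchase probability of the 10 customers "
    "most likely to be successful."
)


def build_task(prompt: str, question: str) -> str:
    """Join the fixed prompt and the specific question into one agent input."""
    return f"{prompt.strip()}\n\n{question.strip()}"


task = build_task(PROMPT, QUESTION)
print(task)

# With smolagents installed, the task could then be handed to an agent.
# Not run here; the model choice and authorized imports are assumptions:
#   from smolagents import CodeAgent, HfApiModel
#   agent = CodeAgent(tools=[], model=HfApiModel(),
#                     additional_authorized_imports=["pandas", "sklearn"])
#   agent.run(task)
```

Keeping the prompt and the question separate makes it easy to reuse the same analysis instructions while changing only the question.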

The AI agent then automatically generates the Python code for the analysis, doing this work instead of a human. As a result, "the top 10 customers most likely to be successfully marketed to" are presented. These customers have a purchase probability close to 100%! Amazing!

"Top 10 customers most likely to be successfully marketed to"

In this way, the user only needs to give the instruction "tell me the top 10 customers most likely to be successful," and the AI agent writes the code to calculate the purchase probability for each customer. The same approach can be applied to various other tasks. I'm looking forward to future developments.

3. Future Expectations for Marketing AI Agents

As before, we implemented the agent with "smolagents." It's easy to implement, and although its behavior isn't perfect, it's reasonably stable, so we plan to use it actively in 2025 to develop various AI agents. The code used here has been published as a notebook (2). The data used this time is relatively simple demo data with over 40,000 samples; given the opportunity, I would like to see how the AI agent behaves with larger and more complex data. With more data, the possibilities should grow accordingly, so we can expect even more. Please look forward to the next AI agent article. Stay tuned!

1) Introducing smolagents, a simple library to build agents, Aymeric Roucher, Merve Noyan, Thomas Wolf, Hugging Face, Dec 31, 2024

2) https://github.com/TOSHISTATS/AI-agent-for-Marketing_20250125/blob/main/AI_agent_for_Marketing_20250125.ipynb

Notice: ToshiStats Co., Ltd. and I do not accept any responsibility or liability for loss or damage occasioned to any person or property through using materials, instructions, methods, algorithms or ideas contained herein, or acting or refraining from acting as a result of such use. ToshiStats Co., Ltd. and I expressly disclaim all implied warranties, including merchantability or fitness for any particular purpose. There will be no duty on ToshiStats Co., Ltd. and me to correct any errors or defects in the codes and the software.